Issues when Cross-building TFLite GPU Delegate and loading the built so file in Python · Issue #46498 · tensorflow/tensorflow · GitHub

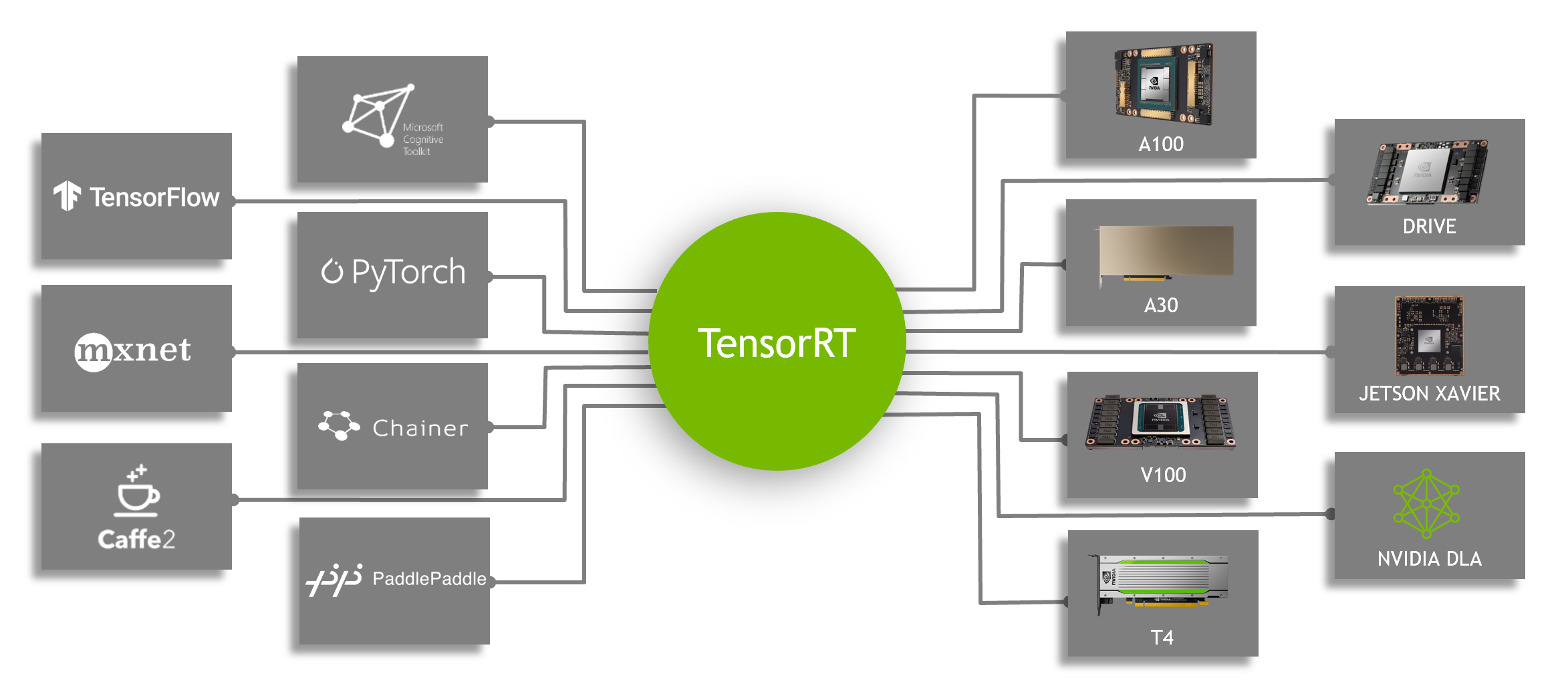

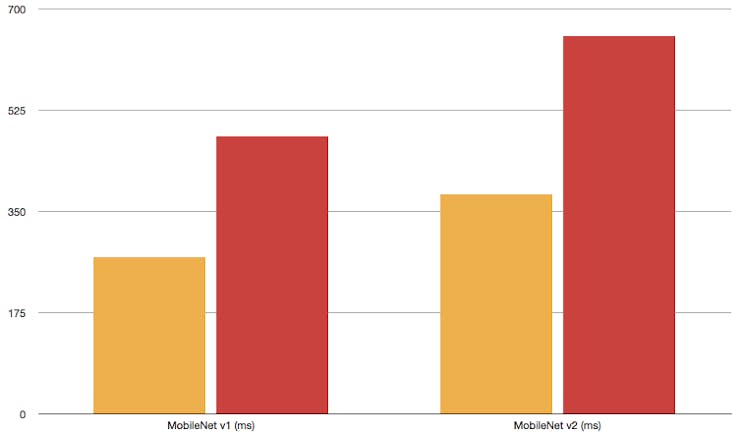

Applied Sciences | Free Full-Text | A Deep Learning Framework Performance Evaluation to Use YOLO in Nvidia Jetson Platform

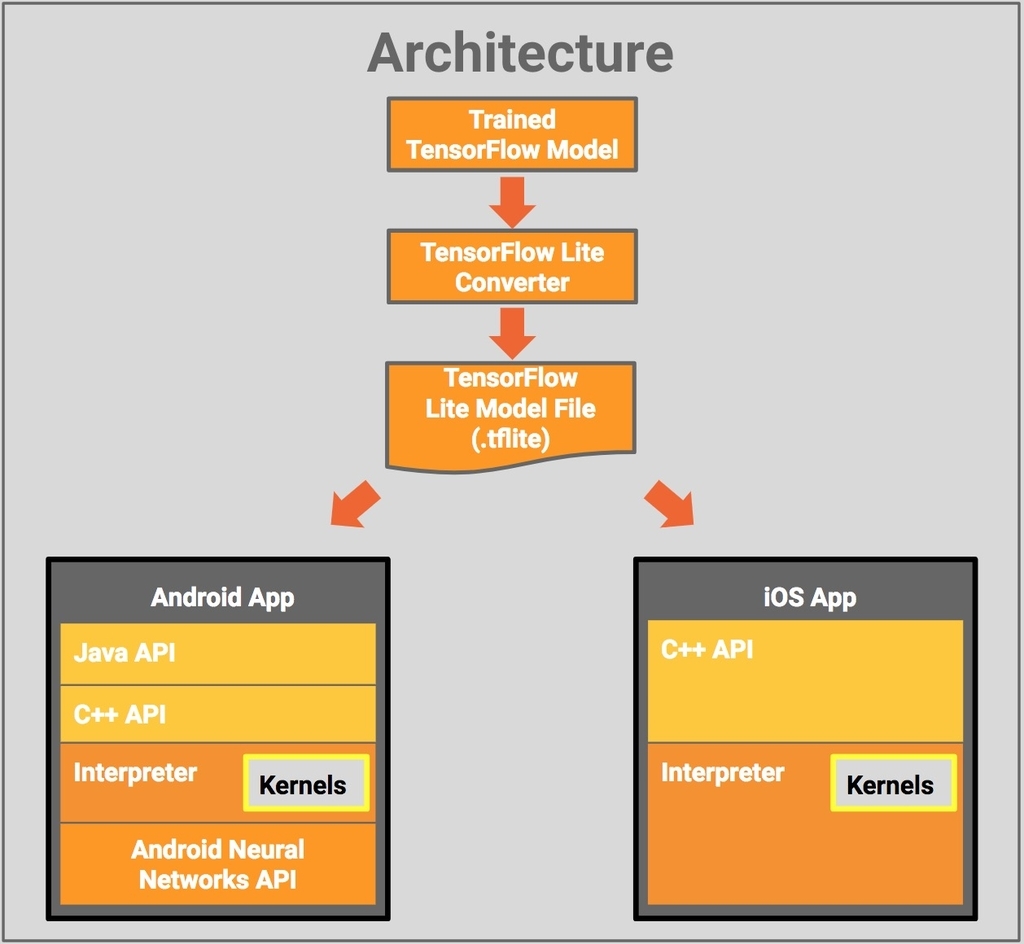

TensorFlow on Twitter: "🎉 The wait is over! TensorFlow 2.0 is finally here. Driven by community feedback, this release provides a complete set of tools for developers, enterprises, and researchers to easily